When You Must Buy Versus Build, There Are Ways To Help You Avoid Any Slip-ups

More and more projects involve integrating custom-developed or commercial off-the-shelf (COTS) components rather than developing or enhancing software in-house. In effect, these two approaches constitute direct and indirect outsourcing, respectively, of some or all of the development work for a system.

While some project managers see such outsourcing as reducing overall risk, each integrated component can bring significantly increased risks to system quality. If your organization outsources development or plans to, you’ll need to understand the factors that lead to these risks and some strategies you can use to manage them.

I’ll illustrate the factors and the strategies with a hypothetical project. In this project, assume you’re the project manager for a bank that is creating a Web application that allows homeowners to apply for a home equity loan.

You’re buying components from two suppliers. From one, you’ve purchased a COTS database management system. The other is an outsourced custom development organization you’ll hire to develop the Web pages, the business logic on the servers, and the database schemas and commands to manage the data.

First, let’s look at how to recognize the factors that create quality risks; then we’ll turn to strategies you can use to manage those risks.

Quality Risk Factors in Integration

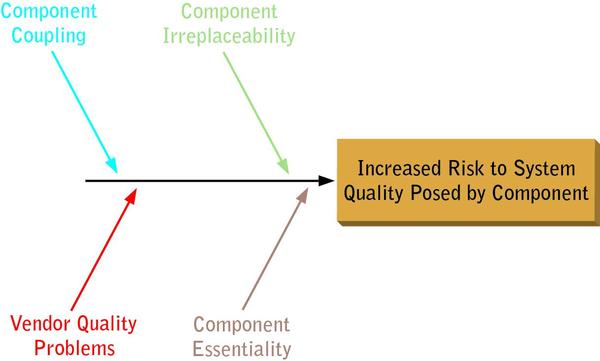

Figure 1 shows four factors that lead to increased quality risk for a system. Let’s look at each in turn.

FIGURE 1: THE FOUR CORNERS OF QUALITY RISK

One factor that increases quality risk is component coupling. A strongly coupled component interacts heavily with the rest of the system, so when it fails, the consequences for the whole system are severe.

For example, suppose the customer table in the Web application database becomes locked and inaccessible under normal load. Most of the other components of the system, unable to access customer information, would also fail. The database is strongly coupled to the rest of the system.

Another factor that increases risk is irreplaceability. A component is irreplaceable when few similar components are available, or when replacing it is costly or requires a long lead time.

If such a component creates quality problems for your system, you’re stuck with them. For example, the database package you choose might be replaceable, provided that you don’t do anything non-standard with it.

The custom-developed Web application is another matter. The development organization will expect to be paid for it regardless, and if you try to replace it, off-the-shelf alternatives might not exist.

Yet another factor that increases risk is essentiality. A component is essential when one or more key features of the system will be unavailable if it doesn’t work properly.

For example, suppose you planned to include a pop-up loan planner on the first page of your application to allow customers to evaluate various payment scenarios. If that component failed, you could still deliver most of your application’s major features, since the planner is not essential to the system.

But if the subsystem that accesses a credit bureau to check customer credit scores doesn’t work, you can’t process loan applications. Checking credit scores is essential to the application.

The final factor that increases risk is vendor quality problems, which are compounded when the vendor is slow to turn around fixes for reported bugs.

If the vendor is likely to send you a bad component, the risk to the quality of the entire system is higher.

For example, if you buy a commercial database from a reputable, established vendor, or if you select a custom development organization with a proven track record, then you’ll probably have fewer problems.

If you use a new open source database that has never been used in commercial applications, or a newly founded custom development organization, you’ll probably have more problems, particularly if technical support is poor or absent altogether.

It’s easy to see how these factors could affect a typical data center application, but they apply elsewhere too. Imagine a weapons system for which defense contractors intend to develop software to run on COTS platforms. The situation is similar, though irreplaceability and vendor quality problems could be exacerbated by limited choices of components and vendors.
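One way to make the four factors concrete is to rate each outsourced component on them and compare the results. The sketch below is illustrative only. It treats coupling, irreplaceability and essentiality as the impact of a failure, and vendor quality problems as its likelihood, echoing the idea that a bad vendor raises the likelihood of trouble while a more coupled, essential and irreplaceable component raises the stakes. The 1-to-5 scale, the averaging and multiplying, the `Component` class and the example ratings for the loan application are all hypothetical assumptions, not a method prescribed here.

```python
from dataclasses import dataclass


@dataclass
class Component:
    """Hypothetical 1 (low) to 5 (high) ratings for one outsourced component."""
    name: str
    coupling: int          # how strongly a failure spreads into the rest of the system
    irreplaceability: int  # few alternatives, or costly / slow to replace
    essentiality: int      # key features are unavailable if it fails
    vendor_risk: int       # likelihood of poor quality or slow bug fixes

    def impact(self) -> float:
        # Assumption: impact is the average of the three impact-related factors.
        return (self.coupling + self.irreplaceability + self.essentiality) / 3

    def risk_score(self) -> float:
        # Assumption: risk = likelihood x impact, a common risk-analysis convention.
        return self.vendor_risk * self.impact()


def rank_by_risk(components):
    """Return the components ordered from highest to lowest quality risk."""
    return sorted(components, key=lambda c: c.risk_score(), reverse=True)


# Hypothetical ratings for the home equity loan example.
components = [
    Component("COTS database", coupling=5, irreplaceability=2,
              essentiality=5, vendor_risk=2),
    Component("Custom Web application", coupling=4, irreplaceability=5,
              essentiality=5, vendor_risk=3),
    Component("Pop-up loan planner", coupling=1, irreplaceability=2,
              essentiality=1, vendor_risk=3),
]

for c in rank_by_risk(components):
    print(f"{c.name}: impact={c.impact():.1f}, risk={c.risk_score():.1f}")
```

With these made-up ratings, the custom Web application ranks highest because it is both essential and hard to replace, while the non-essential loan planner ranks lowest. The ranking is the useful output; the absolute numbers mean little on their own.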

How might these risks be mitigated? I’ve seen and used four effective strategies.

Trust Your Vendor

One strategy is simply to trust the vendor’s component quality and testing, and assume they’ll deliver a sufficiently good, more-or-less working component to you. This approach may sound naïve, but project teams do it all the time. If you choose this course, do so with your eyes open. Understand the risks you’re accepting. Allocate time and budget as a contingency for poor component quality. The more coupled, essential and irreplaceable the component, the greater the impact if that trust proves misplaced.

To continue with our example, you might choose to trust both the custom development organization and the database vendor. You could make such a decision rationally by checking the development organization’s references, assuming they can provide references for customers who used them for projects that are very similar to yours in design and scale.

The same is true for the database vendor, though you might have to do your own research if their sales and marketing staff cannot or will not provide references.

In the custom development situation, relying solely on an acceptance test is practically the same as trusting your partner. For the COTS database, you could run an acceptance test at the start of the project, using simple models to evaluate database performance, reliability and data quality under your intended load conditions.
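An early acceptance test against a simple data model might look something like the following minimal sketch. It assumes Python’s built-in `sqlite3` module as a stand-in for the COTS database, and the schema, data volume, lookup count and 5 ms 95th-percentile threshold are hypothetical placeholders for your own intended load conditions and pass/fail criteria. It checks data quality (row count and sampled read-back) and lookup latency; it runs lookups sequentially on one connection, so a realistic test would also simulate concurrent users against the actual product.

```python
import sqlite3
import statistics
import time


def run_acceptance_test(num_customers=10_000, num_lookups=2_000, max_p95_ms=5.0):
    """Load a simple customer model, then check data quality and lookup latency.

    sqlite3 is only a stand-in for the COTS database. The schema, data volume,
    lookup count and 5 ms p95 threshold are hypothetical placeholders.
    """
    conn = sqlite3.connect(":memory:")
    conn.execute(
        "CREATE TABLE customer ("
        "id INTEGER PRIMARY KEY, name TEXT NOT NULL, credit_score INTEGER)"
    )
    rows = [(i, f"customer-{i}", 300 + i % 551) for i in range(num_customers)]
    conn.executemany("INSERT INTO customer VALUES (?, ?, ?)", rows)
    conn.commit()

    # Data quality, part 1: the row count must match what was loaded.
    stored = conn.execute("SELECT COUNT(*) FROM customer").fetchone()[0]
    data_ok = stored == num_customers

    latencies_ms = []
    for i in range(num_lookups):
        key = (i * 7919) % num_customers  # spread lookups across the table
        start = time.perf_counter()
        row = conn.execute(
            "SELECT name, credit_score FROM customer WHERE id = ?", (key,)
        ).fetchone()
        latencies_ms.append((time.perf_counter() - start) * 1000)
        # Data quality, part 2: each sampled row must read back unchanged.
        if row != rows[key][1:]:
            data_ok = False
    conn.close()

    p95_ms = statistics.quantiles(latencies_ms, n=20)[-1]  # 95th percentile
    return {"data_ok": data_ok, "p95_ms": p95_ms,
            "passed": data_ok and p95_ms <= max_p95_ms}


print(run_acceptance_test())
```

The point of writing the pass/fail criteria down as code before the project starts is that they can’t quietly drift later, which matters if a vendor disputes whether a failure is a bug.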

However, for the custom-developed component, you’ll have to wait until you receive the component before you can acceptance-test it. And if the component fails, what options do you have?

Even if the contract stipulates that you don’t have to pay under these circumstances, you face a good chance of a lawsuit, and you also have the actual (and opportunity) costs of starting over with a new custom development organization.

Manage Your Vendor

Another strategy is to integrate, track and manage the vendor’s testing of their component as part of an overall, distributed test effort for the system. This requires up-front planning, along with enough clout with the vendor to insist that they treat their test teams and test efforts as subordinate to (and contained within) yours.

To continue with our hypothetical project, imagine that you’re working at a large bank and that the custom development organization is a small firm. They’ll probably be motivated to get and retain your business. They’ll be especially flexible if they think that you have particularly good testing processes and that they can learn something from you.

In exchange for the effort you expend managing their testing, you’ll have early warning should quality problems emerge, and therefore more options to deal with such an outcome.

Conversely, if you’re buying the database from a large COTS vendor, they probably see your business as a small part of their overall product sales. They have their own test processes, product road map and target release dates. It’s highly unlikely that they’ll be receptive to offers, much less insistence, that you manage their testing operation.

Even smaller COTS vendors want to sell you the component they’re offering, as it stands. They’ll likely be wary of any open-ended arrangement under which you might redefine the component’s requirements through expansive testing and ambiguous or evolving pass/fail criteria. I’ve seen more than one COTS vendor get burned by customers when they allowed this to happen.

Smart COTS vendors (large or small) would probably insist that this management of their testing, and any resulting bug fixes and change requests, be considered a customization of their component subject to time-and-materials billing.

The only likely exceptions to such a condition would arise when the COTS vendor saw a strong possibility that working with you to fix problems and change the product would benefit their current or future customers sufficiently to justify the risks they’d be taking.

Fix Your Vendor

Another option is to fix the component vendor’s testing or quality problems. In other words, you go into the situation expecting to either revamp the vendor’s processes or build new processes for them from scratch. Both sides must expect that substantial effort, including product modifications, will result. Once again, a key assumption is that you have the clout to insist that you be allowed to go in and straighten out what’s broken in their test and quality processes.

This might sound daunting, but on one project the client hired me to do exactly that, and it worked out well. The vendor was compensated for their part of the work, including the modifications. And my client felt that the vendor brought enough technical innovation and capability to the project to justify the effort of fixing their quality and testing problems. With expectations aligned from the start, both sides were happy.

Going back to our example, suppose you assess the outsourced development organization before the project and find their testing and quality processes lacking. They accept your assessment. You offer to help them fix the issues you identified, and they accept that offer. This can succeed if your assessment identified the major problems, if you and the vendor can resolve those problems within the scope, budget and schedule for the project, and if continuing to work with that vendor makes sense for other reasons.

However, it’s difficult to imagine the database vendor agreeing to an assessment of their testing in the first place, let alone allowing you to come in and change it. The very fact that a COTS vendor might agree to such a request should set off alarm bells. You should then ask yourself whether they actually have a COTS product to sell or whether you’re dealing with a prototype masquerading as a product.

Test Your Vendor’s Component

A final option, especially if you have proof of incompetent testing by the vendor, is to disregard their testing, assume that the component is coming to you untested, and retest the component. You’ll have to allocate time and effort for this, and realize that the vendor will likely push to have every bug report you submit reclassified as a change request except in the most egregious cases. You also have to ask yourself if the vendor might decide, at some point, to cut their losses and disengage from the project. You’ll want to make sure you have contingency plans in place should that happen.

I’ve had to do this for clients on system testing projects. On one notable project, a vendor sold my client a mail server component that was seriously buggy. We became aware of the problems through a series of misadventures: promised deliverables kept showing up late and with substantive bugs, and fit-and-finish problems gradually eroded our confidence in the vendor.

Eventually, the component did work and was included in the system, but the entire process took a few months, not the one-week deliver-and-integrate that was in the project plan. Fortunately, slack elsewhere in the schedule prevented this from becoming a project-endangering episode.

Returning once again to our example, suppose that you become aware of serious quality problems in the early prototypes delivered by the custom development organization. You can no longer trust their testing. There’s not much point in managing a test process that is clearly broken. There’s no time remaining in your schedule to go in and fix their testing process. So, if you intend to stick with this vendor, you’ll need to start a serious testing effort to take over where they’ve failed.

Suppose you become aware of similar problems with the database vendor. You can confront the vendor with the problems. But if they delivered something to you with the assertion that it would work, can you really trust them to resolve the problems now? Would they be likely to let you manage their testing? If you do the testing yourself, do you think they’ll fix the problems you find? If the component isn’t essential, you’re best off omitting it; if it’s replaceable, you’re best off replacing it.

Whether for a COTS component or a custom-developed component, these are clearly nasty scenarios, and at some point you’d have to ask yourself how you managed to get into such trouble. If you ran acceptance tests on a COTS component, why weren’t the problems identified?

If you thoroughly vetted your custom developer, why did they prove incompetent? How should your quality risk-mitigation strategy for outsourced components change for future projects? These are good questions, but save them for the project retrospective. During the project, the focus must remain on achieving the best possible outcome.
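The in-project responses discussed in this section, for a component that turns out to be seriously buggy, can be summarized as a small decision helper. This is a sketch of one reading of the text, not a formal procedure: the function name and its yes/no inputs are hypothetical, `can_fix_vendor_process` is assumed to mean you have the clout, a willing vendor and room in scope, budget and schedule, and the final fallback for an essential, irreplaceable COTS component (retest it yourself) is an assumption.

```python
def respond_to_quality_problems(is_cots: bool, essential: bool, replaceable: bool,
                                can_fix_vendor_process: bool) -> str:
    """Hypothetical helper: pick a response once serious quality problems appear."""
    if is_cots:
        # A non-essential COTS component is best omitted.
        if not essential:
            return "omit"
        # A replaceable one is best replaced.
        if replaceable:
            return "replace"
        # Assumption: otherwise retest it yourself and plan for disputed fixes.
        return "retest"
    # Custom-developed: fixing the vendor's process needs clout, a willing
    # vendor and room in the schedule; without those, take over the testing.
    if can_fix_vendor_process:
        return "fix"
    return "retest"


print(respond_to_quality_problems(is_cots=False, essential=True,
                                  replaceable=False, can_fix_vendor_process=False))
```

For the custom Web application in the example, with no time left to fix the vendor’s process, the helper returns `"retest"`, matching the advice to start a serious testing effort of your own.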

Implications, Considerations And Success

All of these options can carry serious political implications. Should problems arise, the vendor is unlikely to accept your assertion that their testing staff is incompetent or their quality unacceptable.

They might well attack your credibility. If a senior manager chose that vendor (and it might have been an expensive choice), that person might side with the vendor against you.

So if the triggering conditions for any of these strategies arise during the project, you’ll need to bring data to the discussion.

Better yet, if you’re dealing with a custom-developed component, see if you can influence the contract negotiations up front to require the vendor to submit their tests and test results, and to allow your team to perform acceptance testing before payment.

Build sufficient contingency plans into your schedule, including an allowance for replacement of the vendor during the project if things start looking bad. Make sure the vendor understands that you’re paying attention to quality and that payment depends on delivery of a quality product on time. It’s amazing how motivational such clauses can be.

For COTS components, arrange a careful component selection process, including vendor research, talking to references and acceptance-testing using carefully designed tests. Identify alternative sources if possible. Consider the possibility and the consequence of omitting the component if it isn’t essential.

Finally, DIY

Even with the risks to system quality managed at the component level, it’s still possible to make a serious testing mistake. Even the best-tested, highest-quality components might not work well in the particular environment in which you intend to use them. Plan on integration-testing and system-testing the integrated system yourself.

Integration of COTS and outsourced custom-developed software is a smart choice for many organizations. It’s a trend that continues to grow as organizations gain experience with it.

To ensure success on your next integration project, consider the factors that create quality risk in such scenarios. Select strategies that mitigate those risks. Build risk mitigation and contingency plans into your project plan.

If you do these things and execute the project carefully, with an eye on testing and quality, you can control the risks and reduce the likelihood and impact of component quality problems.

About the Author

Rex Black – President and Principal Consultant of RBCS, Inc

With a quarter-century of software and systems engineering experience, Rex specializes in working with clients to ensure complete satisfaction and positive ROI. He is a prolific author, and his popular book, Managing the Testing Process, has sold over 25,000 copies around the world, including Japanese, Chinese, and Indian releases. Rex has also written three other books on testing – Critical Testing Processes, Foundations of Software Testing, and Pragmatic Software Testing – which have also sold thousands of copies, including Hebrew, Indian, Japanese and Russian editions. In addition, he has written numerous articles and papers and has presented at hundreds of conferences and workshops around the world. Rex is the immediate past president of the International Software Testing Qualifications Board and the American Software Testing Qualifications Board.